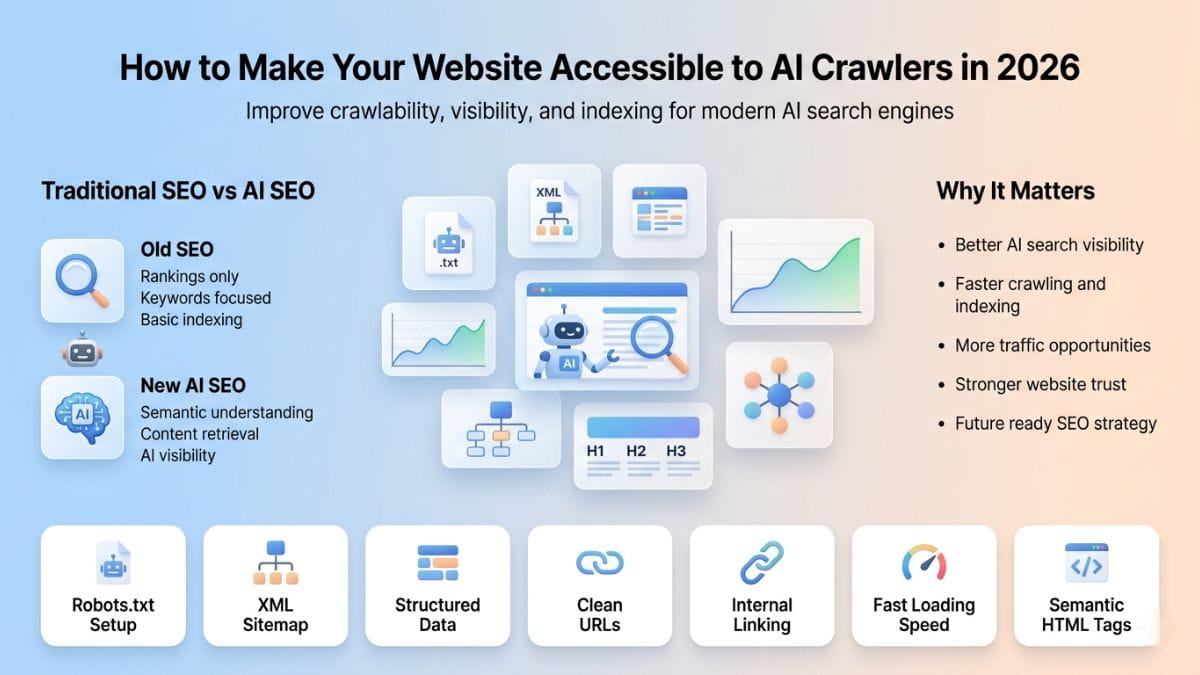

How to Make Your Website Fully Accessible to LLM Engines and AI Crawlers

Search visibility is no longer controlled only by traditional search engines. In 2026, large language models (LLMs), AI assistants, and autonomous crawlers increasingly read, interpret, and retrieve content directly from websites to generate answers, recommendations, and summaries.

If your site is not accessible to these systems, it risks becoming invisible to them, regardless of how strong your traditional rankings may be.

This guide explains how to build a genuinely AI-friendly website, focusing on the crawlability, structure, and technical SEO practices that matter for both humans and machines.

How do LLM engines and AI crawlers differ from traditional search engine crawlers?

Traditional search crawlers such as Googlebot are optimized around keyword matching and document-level ranking. They discover URLs, index pages, and evaluate relevance largely through lexical signals, links, and engagement metrics.

LLM engines and AI crawlers operate on a fundamentally different model. Instead of ranking pages, they perform semantic reasoning and synthesis, often using fan-out search, in which a single prompt is expanded into multiple sub-queries whose results are evaluated and recombined into a final answer.
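To make the fan-out idea concrete, here is a minimal Python sketch under stated assumptions. It is not how any particular AI engine is implemented. The corpus, the `example.com` URLs, and the helper names `fan_out`, `retrieve`, and `fan_out_search` are all hypothetical. Splitting a prompt on " and " stands in for the model-driven query decomposition a real engine would perform, and word-overlap scoring stands in for the embedding-based semantic matching such systems are likely to use, so the example stays dependency-free.

```python
# Hypothetical toy corpus: page URL -> page text (illustrative only, not real data).
CORPUS = {
    "https://example.com/robots": "robots.txt rules control which crawlers may fetch which paths",
    "https://example.com/schema": "structured data such as schema.org markup describes page entities",
    "https://example.com/speed": "fast server responses help crawlers fetch more pages",
}


def fan_out(prompt: str) -> list[str]:
    """Expand one prompt into sub-queries.

    Assumption: a naive split on ' and ' replaces the LLM-driven
    decomposition a real engine would use.
    """
    return [part.strip() for part in prompt.split(" and ") if part.strip()]


def retrieve(sub_query: str, corpus: dict[str, str], k: int = 1) -> list[str]:
    """Return up to k URLs whose text shares the most words with the sub-query.

    Word overlap is a lexical stand-in for semantic (embedding) similarity.
    """
    terms = set(sub_query.lower().split())
    scored = [(url, len(terms & set(text.lower().split()))) for url, text in corpus.items()]
    scored = [item for item in scored if item[1] > 0]  # drop pages with no overlap
    scored.sort(key=lambda item: item[1], reverse=True)  # stable: ties keep corpus order
    return [url for url, _ in scored[:k]]


def fan_out_search(prompt: str, corpus: dict[str, str], k: int = 1):
    """Run every sub-query, then recombine the hits into one de-duplicated source list."""
    per_sub_query = {sq: retrieve(sq, corpus, k) for sq in fan_out(prompt)}
    sources: list[str] = []
    for urls in per_sub_query.values():
        for url in urls:
            if url not in sources:
                sources.append(url)
    return per_sub_query, sources


if __name__ == "__main__":
    hits, cited = fan_out_search("robots.txt crawler rules and schema.org structured data", CORPUS)
    for sub_query, urls in hits.items():
        print(sub_query, "->", urls)
    print("sources:", cited)
```

In this toy model each sub-query retrieves its own best page, so a page can be surfaced for one sub-query even though it matches only part of the original prompt; the recombination step then merges those per-sub-query hits into a single source list.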

As a result, AI systems assess content through a meaning-first lens that changes how information is extracted and used:

Website Info

Category: Digital marketing
Found: 29.04.2026